Generating Synthetic Driving Datasets That Don't Exist Yet

There are amazing real-world driving datasets out there (nuScenes, Waymo Open, KITTI360). Teams drove cars with expensive sensor rigs through cities, recorded everything, and painstakingly labeled it all.

But you’re stuck with whatever cameras were on that specific car. nuScenes has six cameras at 90° field of view. Waymo has five at 60°. Want to train your model with four cameras at 120°? Or test what happens when you remove one camera entirely? You can’t. The data doesn’t exist.

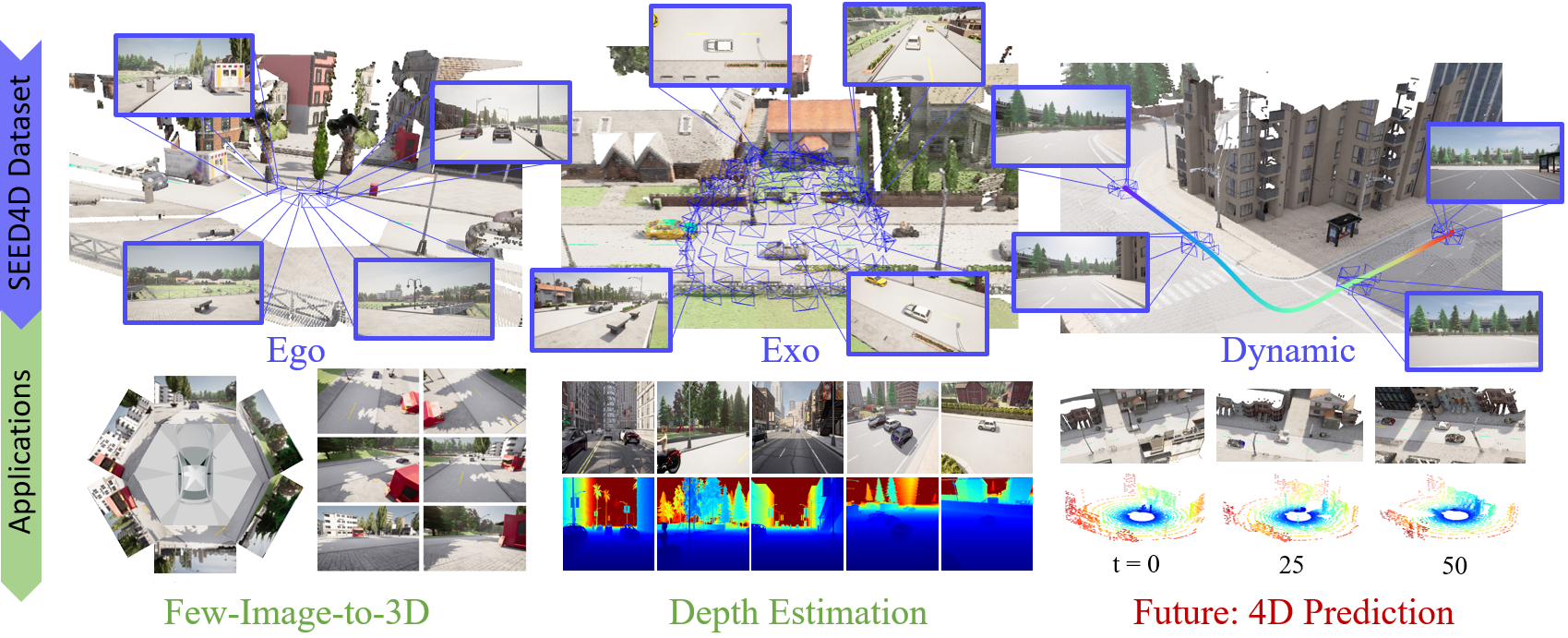

This was a real problem when building 6Img-to-3D. We needed driving data with flexible camera setups, perfect depth information, and views from outside the car too, not just from the roof cameras. No real dataset offers that. So together with Marius Kästingschäfer, Sebastian Bernhard, Dylan Campbell, Eldar Insafutdinov, Eyvaz Najafli, and Thomas Brox, we built SEED4D, a tool that generates exactly the driving data you need using the CARLA simulator.

Published at WACV 2025. Paper on arXiv.

What You Get

You pick a city, weather, time of day, and camera setup. SEED4D fires up CARLA, drops a car into the scene with traffic and pedestrians, and captures everything:

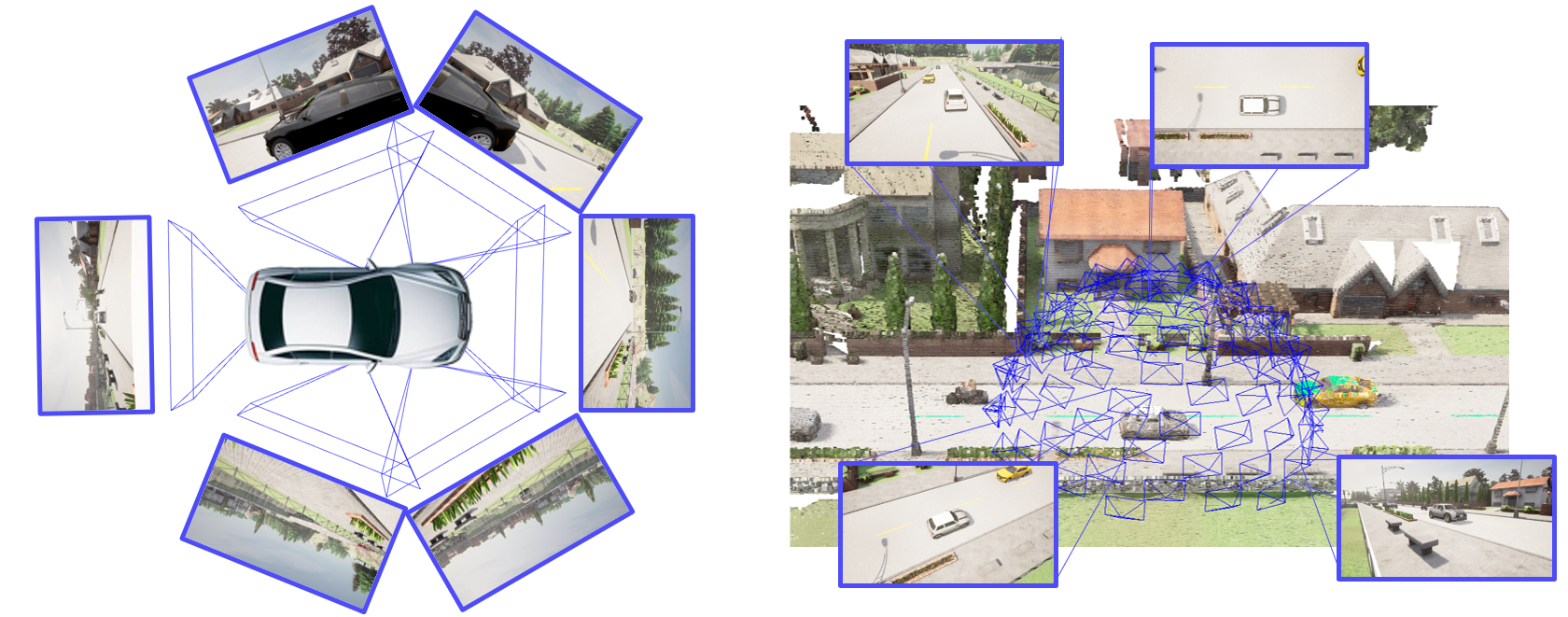

- On-car views: cameras mounted on the vehicle, just like a real self-driving car. You can mimic nuScenes, Waymo, KITTI360, or design your own rig.

- Outside views: cameras placed around the scene, looking at the car from the outside. Like having a drone fleet hovering around your car at every moment.

- All the extras: depth maps, segmentation masks, LiDAR point clouds, 3D bounding boxes, exact camera positions.

- Time: multi-step sequences where traffic moves, pedestrians walk, and the scene evolves. The output separates static background from moving objects, so you can train models that understand which parts of a scene are permanent and which are dynamic.

The output loads directly into Nerfstudio; no conversion scripts needed.
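For readers who have not looked inside a Nerfstudio dataset: it is described by a `transforms.json` file holding per-frame intrinsics and a 4x4 camera-to-world matrix. The sketch below builds one such entry from a resolution and a horizontal field of view. It illustrates Nerfstudio's documented layout only; the helper names, file path, and values are made up for this example, and the exact files SEED4D writes may carry more fields.

```python
import json
import math


def pinhole_intrinsics(width, height, fov_deg):
    """Intrinsics of an ideal pinhole camera from its horizontal FOV."""
    focal = width / (2.0 * math.tan(math.radians(fov_deg) / 2.0))
    return {
        "fl_x": focal,
        "fl_y": focal,          # square pixels assumed
        "cx": width / 2.0,      # principal point assumed at the image center
        "cy": height / 2.0,
        "w": width,
        "h": height,
    }


def make_frame(file_path, cam_to_world, intrinsics):
    """One entry of the "frames" list in a Nerfstudio transforms.json."""
    return {"file_path": file_path, "transform_matrix": cam_to_world, **intrinsics}


# Placeholder pose: the identity matrix puts the camera at the world origin.
# Nerfstudio uses the OpenGL convention (+X right, +Y up, looking along -Z).
identity = [[1.0 if r == c else 0.0 for c in range(4)] for r in range(4)]

intrinsics = pinhole_intrinsics(800, 600, 90.0)
frame = make_frame("images/front.png", identity, intrinsics)  # hypothetical path
transforms = {"camera_model": "OPENCV", "frames": [frame]}

print(json.dumps(transforms, indent=2))
```

The focal length follows from the pinhole relation `fl_x = w / (2 * tan(fov / 2))`, which is why a wider field of view at the same resolution gives a shorter focal length.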

Design Any Camera Rig

Instead of being locked into one fixed setup, you describe your cameras in a simple JSON file: where each one sits on the car, which direction it points, its field of view, and its resolution. Every camera can be different.
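To make that concrete, here is a minimal sketch of what such a rig description could look like, written in Python and dumped to JSON. The field names (`position_m`, `rotation_deg`, `fov_deg`, and so on) are hypothetical and chosen for readability; check the repository for the schema SEED4D actually expects.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class CameraSpec:
    """One camera on the rig. All field names are illustrative assumptions."""
    name: str
    position_m: tuple    # (x, y, z) offset from the vehicle origin, in meters
    rotation_deg: tuple  # (roll, pitch, yaw), in degrees
    fov_deg: float       # horizontal field of view
    width_px: int
    height_px: int

    def validate(self):
        if not 0.0 < self.fov_deg < 180.0:
            raise ValueError(f"{self.name}: FOV must be between 0 and 180 degrees")
        if self.width_px <= 0 or self.height_px <= 0:
            raise ValueError(f"{self.name}: resolution must be positive")


# The "four cameras at 120 degrees" rig: one camera per side of the car.
rig = [
    CameraSpec(f"cam_{yaw}", (0.0, 0.0, 1.6), (0.0, 0.0, float(yaw)), 120.0, 1280, 720)
    for yaw in (0, 90, 180, 270)
]
for camera in rig:
    camera.validate()

rig_json = json.dumps({"cameras": [asdict(camera) for camera in rig]}, indent=2)
print(rig_json)
```

Because every camera is its own entry, mixing resolutions or fields of view within one rig is just a matter of changing individual values.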

We ship presets that match the big-name datasets (nuScenes, Waymo, KITTI360), but you can go beyond them. Want to test how your model handles a cheap 3-camera setup? Or a weird asymmetric rig? Just edit the config and regenerate.

There’s also a random camera generator for training models that need to handle any setup: random positions, orientations, and fields of view, anywhere from 1 to 6 cameras. Combined with a batch config generator, you can produce hundreds of diverse setups across all of CARLA’s towns and weather presets.
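The idea behind random rigs and batch configs can be sketched in a few lines. This is not SEED4D's generator: the sampling ranges, dictionary keys, and function names below are assumptions picked for illustration, and the town and weather names are just examples of CARLA presets.

```python
import random


def random_rig(rng, max_cameras=6):
    """Sample a rig with 1 to max_cameras cameras. Ranges are illustrative."""
    rig = []
    for index in range(rng.randint(1, max_cameras)):
        rig.append({
            "name": f"cam_{index}",
            # Offset from the vehicle origin in meters (assumed bounds).
            "position_m": [rng.uniform(-2.0, 2.0), rng.uniform(-1.0, 1.0), rng.uniform(1.0, 2.5)],
            # Roll fixed at zero, small pitch, any yaw.
            "rotation_deg": [0.0, rng.uniform(-15.0, 15.0), rng.uniform(-180.0, 180.0)],
            "fov_deg": rng.uniform(60.0, 120.0),
        })
    return rig


def batch_configs(seed, count, towns, weathers):
    """Pair each random rig with a random town and weather preset."""
    rng = random.Random(seed)  # seeded so a batch can be regenerated exactly
    return [
        {"town": rng.choice(towns), "weather": rng.choice(weathers), "cameras": random_rig(rng)}
        for _ in range(count)
    ]


configs = batch_configs(
    seed=0,
    count=200,
    towns=["Town01", "Town02", "Town10HD"],
    weathers=["ClearNoon", "WetCloudySunset", "HardRainNoon"],
)
print(len(configs), "configs, first rig has", len(configs[0]["cameras"]), "camera(s)")
```

Seeding the generator matters more than it looks: when a model fails on one particular rig, you want to be able to recreate that exact config.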

This flexibility is what made 6Img-to-3D possible; we could train with variable camera configurations, something you simply can’t do with fixed real-world datasets.

The Inside-Outside Trick

SEED4D captures views from the car and from outside the car at the same time, in the same scene. This is what makes it different from other synthetic data tools.

Say you’re building a model that takes six car-mounted photos and tries to understand the full 3D scene. How do you test whether it actually works? You need ground truth images from viewpoints the car cameras never saw: from above, from across the street, from behind a building. We place 100 external cameras at evenly spaced points on a sphere around the car, all looking inward. It’s the kind of paired data that’s impossible to collect in the real world; you’d need to stop traffic and fly drones around every car.
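One common way to get near-uniform points on a sphere is a Fibonacci lattice, sketched below together with the yaw and pitch that aim each camera at the center. This post does not say which spacing scheme SEED4D uses, so treat the lattice as a stand-in. The radius, the car center, and the right-handed z-up frame are also assumptions; CARLA's own coordinate system is left-handed, so a real integration would need a conversion step.

```python
import math


def fibonacci_sphere(n, radius, center):
    """Return n near-uniformly spaced points on a sphere around center."""
    golden_angle = math.pi * (3.0 - math.sqrt(5.0))
    points = []
    for i in range(n):
        z = 1.0 - 2.0 * (i + 0.5) / n    # height on the unit sphere, in (-1, 1)
        ring = math.sqrt(1.0 - z * z)    # radius of the horizontal circle at that height
        theta = golden_angle * i
        points.append((
            center[0] + radius * ring * math.cos(theta),
            center[1] + radius * ring * math.sin(theta),
            center[2] + radius * z,
        ))
    return points


def look_at_angles(position, target):
    """Yaw and pitch in degrees that point a camera at position toward target."""
    dx, dy, dz = (t - p for p, t in zip(position, target))
    yaw = math.degrees(math.atan2(dy, dx))
    pitch = math.degrees(math.atan2(dz, math.hypot(dx, dy)))
    return yaw, pitch


def place_exo_cameras(n, radius, center):
    cameras = []
    for position in fibonacci_sphere(n, radius, center):
        yaw, pitch = look_at_angles(position, center)
        cameras.append({"position": position, "yaw_deg": yaw, "pitch_deg": pitch})
    return cameras


car_center = (0.0, 0.0, 1.0)                          # assumed car center, in meters
cameras = place_exo_cameras(100, 15.0, car_center)    # assumed 15 m radius
print(cameras[0])
```

A full sphere also puts cameras below the road surface. A practical variant would keep only the points above ground, or sample the upper hemisphere directly.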

The Scale

The datasets we released with the paper:

- Static dataset: 2,000 scenes, 212K images, across 8 different towns and various weather conditions. 437 GB total.

- Dynamic dataset: 10,500 trajectories with moving traffic, 16.8 million images. 1.6 TB total.
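A quick sanity check on those numbers, treating "212K" as exactly 212,000. The 6 + 100 split for the static scenes is an inference from the six car-mounted views and 100 external cameras mentioned earlier in this post, not a figure quoted from the dataset documentation.

```python
static_scenes = 2_000
static_images = 212_000
static_per_scene = static_images / static_scenes
print(static_per_scene)  # 106.0

# Consistent with 6 on-car views plus 100 outside views per scene (inferred).
ego_views, exo_views = 6, 100
print(static_scenes * (ego_views + exo_views) == static_images)  # True

dynamic_trajectories = 10_500
dynamic_images = 16_800_000
dynamic_per_trajectory = dynamic_images / dynamic_trajectories
print(dynamic_per_trajectory)  # 1600.0
```

So each static scene contributes about a hundred images, while each dynamic trajectory contributes 1,600 spread across its time steps and cameras.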

All of it was generated on a handful of GPUs over a few days. After profiling and fixing bottlenecks (a slow PNG encoder, sequential disk writes, wasteful polling loops) and adding parallel processing across multiple CARLA instances, generation got roughly 4x faster. A browser-based UI handles config building (visual camera placement on a 3D car model), job monitoring, and data browsing with an interactive 3D viewer.
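The multi-instance part follows a familiar pattern: keep a pool of simulator ports, hand each job a free one, and return the port when the job finishes. The sketch below shows that pattern only. `render_scene` is a stand-in for whatever connects to a CARLA server and captures a scene, the port numbers are hypothetical, and none of this is taken from the SEED4D code.

```python
import queue
from concurrent.futures import ThreadPoolExecutor


def run_jobs(configs, ports, render_scene):
    """Run render_scene(config, port) for every config, one job per port at a time."""
    free_ports = queue.Queue()
    for port in ports:
        free_ports.put(port)

    def worker(config):
        port = free_ports.get()        # blocks until a simulator instance is idle
        try:
            return render_scene(config, port)
        finally:
            free_ports.put(port)       # release the instance even if the job failed

    # One worker thread per simulator instance; map() keeps results in input order.
    with ThreadPoolExecutor(max_workers=len(ports)) as pool:
        return list(pool.map(worker, configs))


def fake_render(config, port):
    """Placeholder for the real capture step (hypothetical)."""
    return (config, port)


jobs = [f"scene_{i}" for i in range(6)]
results = run_jobs(jobs, ports=[2000, 2010, 2020], render_scene=fake_render)
print(results)
```

Threads are enough here because the heavy work happens inside the simulator processes; the Python side mostly waits on them.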

The paper is on arXiv and the code is open source at github.com/tgieruc/seed4d.

SEED4D: A Synthetic Ego-Exo Dynamic 4D Data Generator, Driving Dataset and Benchmark. Published at WACV 2025.